Have you ever faced the necessity to load data from multiple CSV files into multiple database tables? Not so uncommon, right? In this article, we share a step-by-step tutorial on how to perform an automatic bulk insert from the CSV files. The script examples are provided too.

In the tutorial, we will be using the Data Pump for SQL Server tool which is a SQL Server Management Studio add-in for filling SQL databases with external source data and migrating data between systems. It supports the command-line interface, which gives the user richer control over the tool’s functionality and allows automating and scheduling regular data import and export tasks.

Contents

- What is the CSV format and why is it so popular?

- How to import multiple CSV files into multiple SQL Server tables in one go

- Conclusion

What is the CSV format and why is it so popular?

Comma-separated values (or CSV) format is extremely widespread today. It was designed to store and manage data without difficulty. CSV files are plain-text files that are easy to create, read, and manipulate. In fact, CSV is a delimited text file that uses a comma to separate values. Since CSV files typically store tabular data in plain text, they’re simpler to import into databases. CSV parsing is easy to implement, this format can be processed by almost all the applications, it better organizes large amounts of data. All these advantages make the CSV format so popular today.

How to import multiple CSV files into multiple SQL Server tables in one go

As a SQL Server developer, analyst, or DBA, you may face situations when you need to insert data from more than one CSV file into more than one database table. Can you do it all at once and thus save a lot of time and effort? Let’s check.

Prerequisites

- Data Pump for SQL Server installed (In case you don’t have the tool on your machine, you can download it from our website).

- CSV files to be imported.

- Data import templates created with the help of Data Pump.

- The text file containing paths to the data import templates.

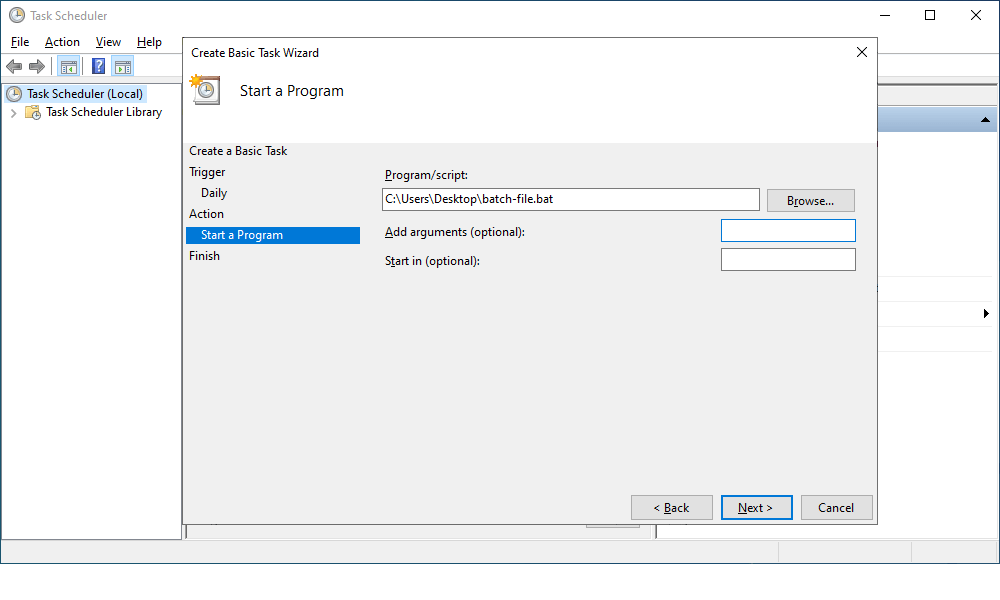

- A .bat file with the script to run the import job.

Step 1. Create data import templates

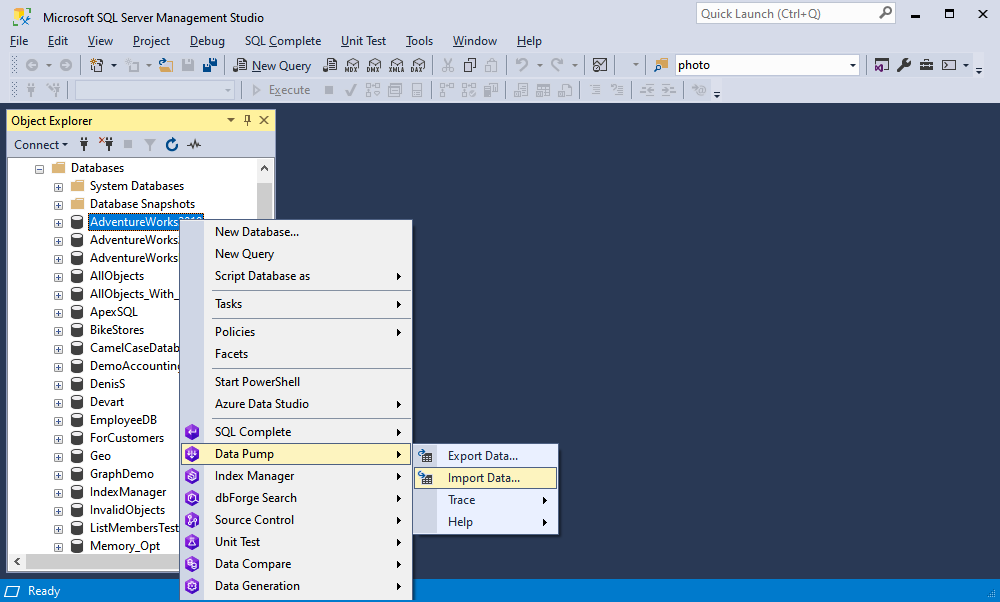

1.1 In Object Explorer, right-click the database you want to import data and select Data Pump -> Import Data.

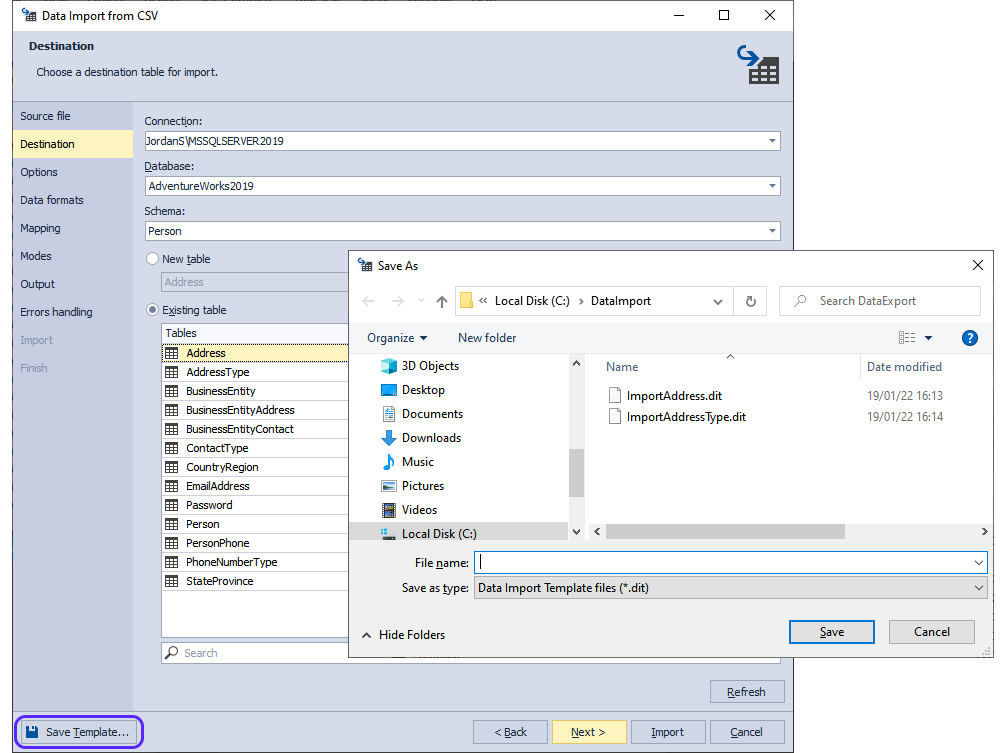

1.2 In the wizard that opens, select the file format to be imported—CSV in our case—and continue customizing the import process. Once done, click Save Template.

Step 2. Create a text file with the list of import templates

2.1 Launch any text editor tool, for example, Notepad.

2.2 Enter the names of the import templates. Here you can write as many templates as you need, just separate them with a comma. In our worked example, the file contents look as follows:

C:\DataImport\ImportAddress.dit,

C:\DataImport\ImportAddressType.dit2.3 Save the file. We save the file as Files.txt.

Step 3. Create a .bat file to run the import job

3.1 Launch any text editor tool, for example, Notepad.

3.2 Enter the script for launching the data import process like in the examples below. Don’t forget to change the variables.

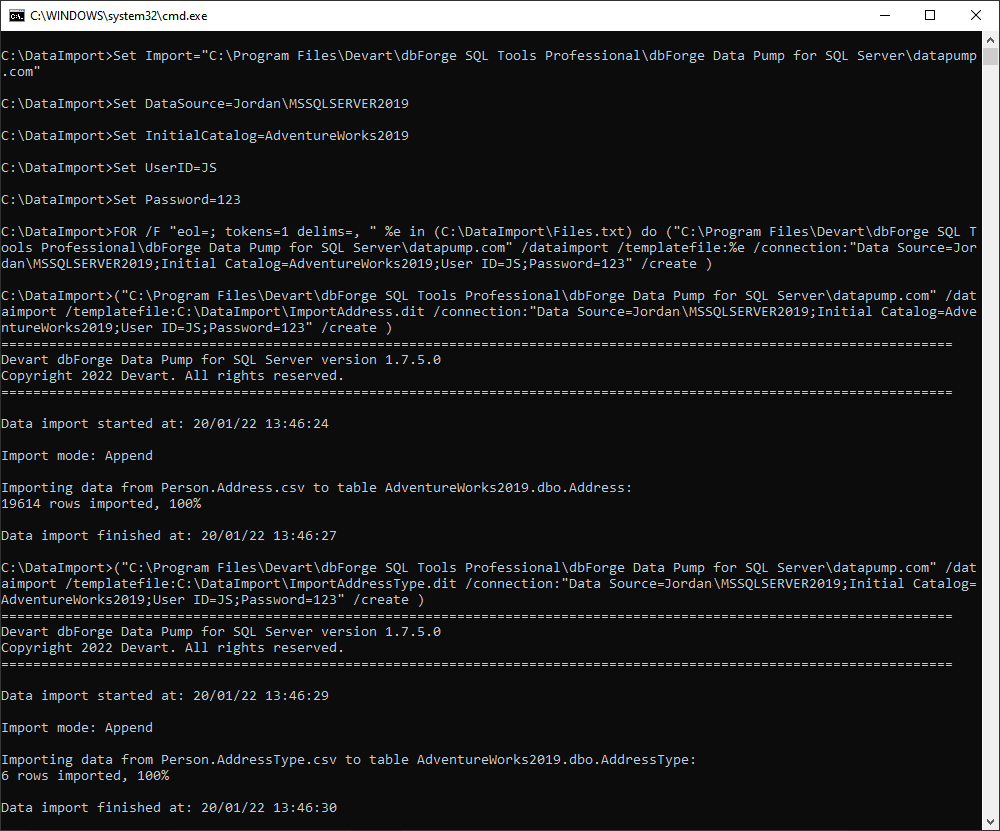

Set Import="C:\Program Files\Devart\dbForge SQL Tools Professional\dbForge Data Pump for SQL Server\datapump.com"

Set DataSource=%DataSource%

Set InitialCatalog=%InitialCatalog%

Set UserID=%UserID%

Set Password=%Password%"

FOR /F "eol=; tokens=1 delims=, " %%e in (C:\DataImport\Files.txt) do (

%import% /dataimport /templatefile:%%e /connection:"Data Source=%DataSource%;Initial Catalog=%InitialCatalog%;User ID=%UserID%;Password=%Password%" /create

)

pauseNote: Set Import is a default installation path for dbForge Data Pump for SQL Server. However, if you have changed it, you will need to specify the correct path to the required tool’s .com file as well.

3.3 Save the file.

Step 4. Populate the database from CSV files via the command line

Now, all you need to do is execute the .bat file via the command line.

Step 5. Schedule a bulk insert from the command-line

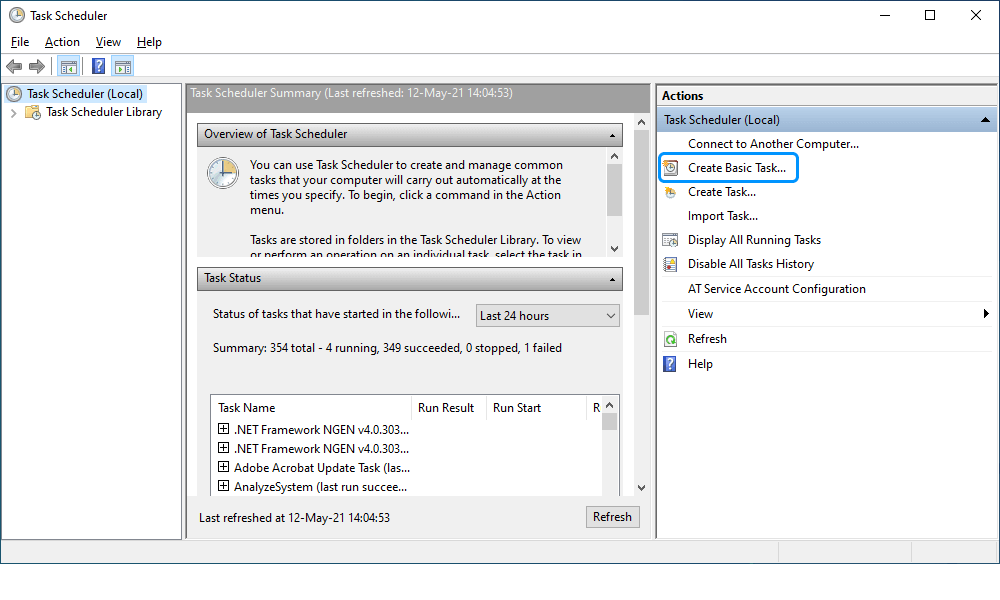

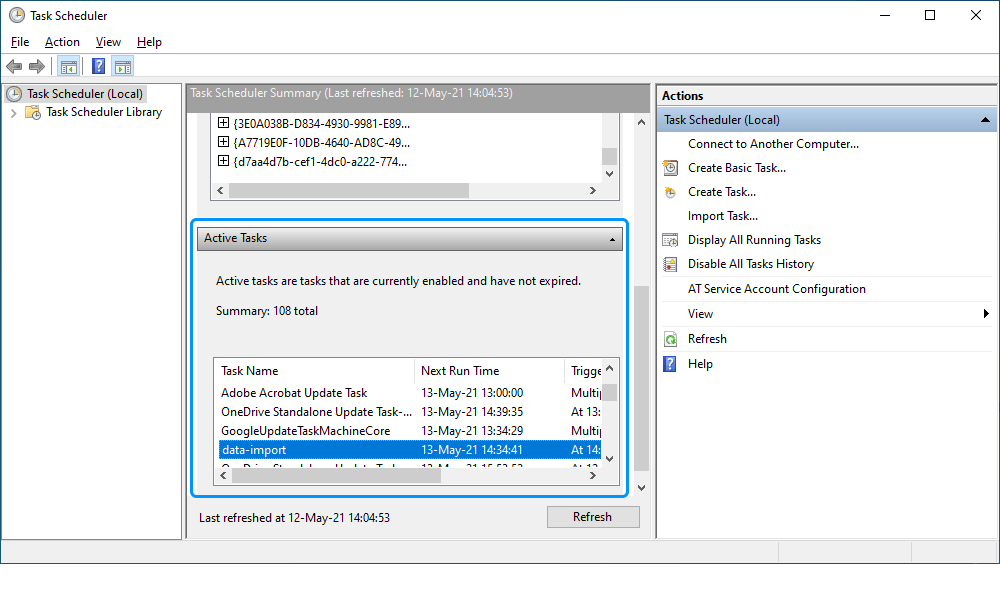

After the batch file for database data import has been created, you can proceed with the creation of the import task using the Windows Task Scheduler so that the job could be carried out automatically.

5.1 Open the Control Panel > Administrative Tools and select Task Scheduler.

5.2 In the Task Scheduler dialog that opens, navigate to the Actions pane and click Create Basic Task to create a scheduled task.

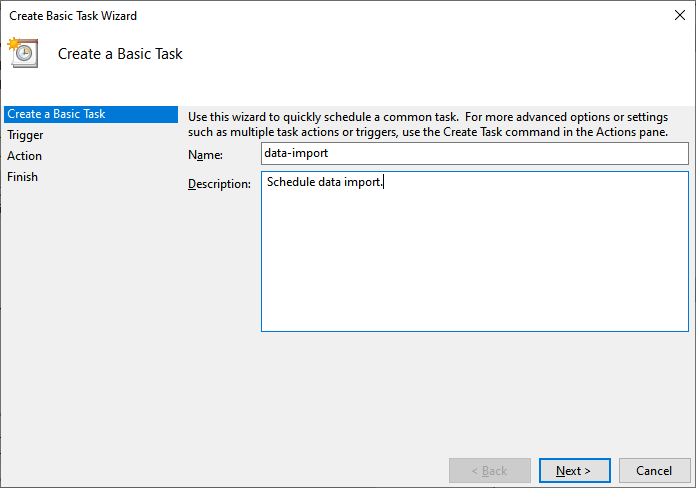

5.3 In the Create Basic Task Wizard dialog that opens, provide the name and description of the task and click Next.

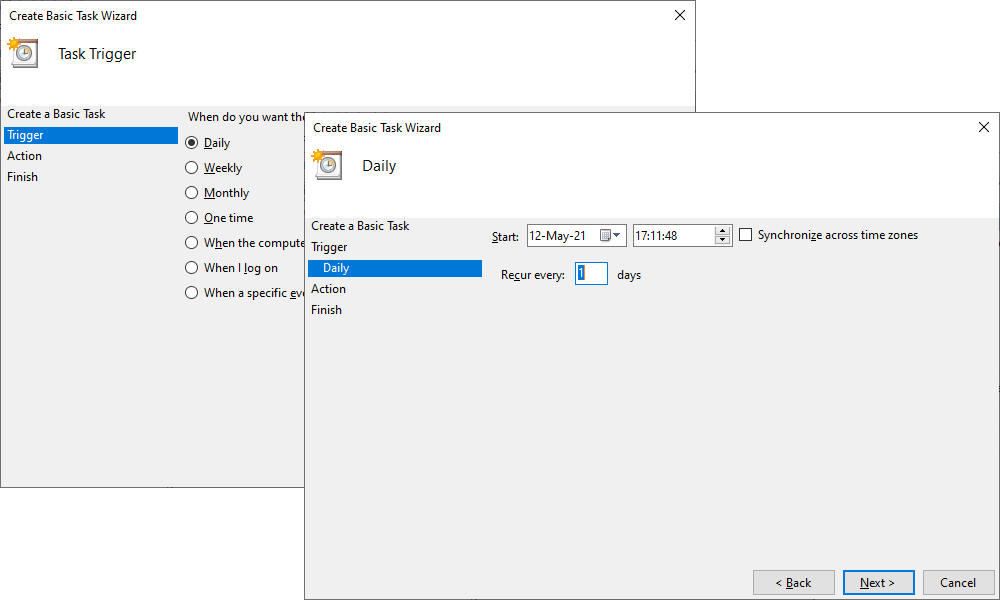

5.4 On the Trigger tab, choose when to launch the task and then click Next.

- Schedule based on the calendar: Daily, Weekly, Monthly, or One time. For this, specify the schedule you want to use.

- Schedule based on common recurring events: When the computer starts or When I log on.

- Schedule based on specific events: When a specific event is logged. For this, specify the event log, source, and event ID using the drop-down lists.

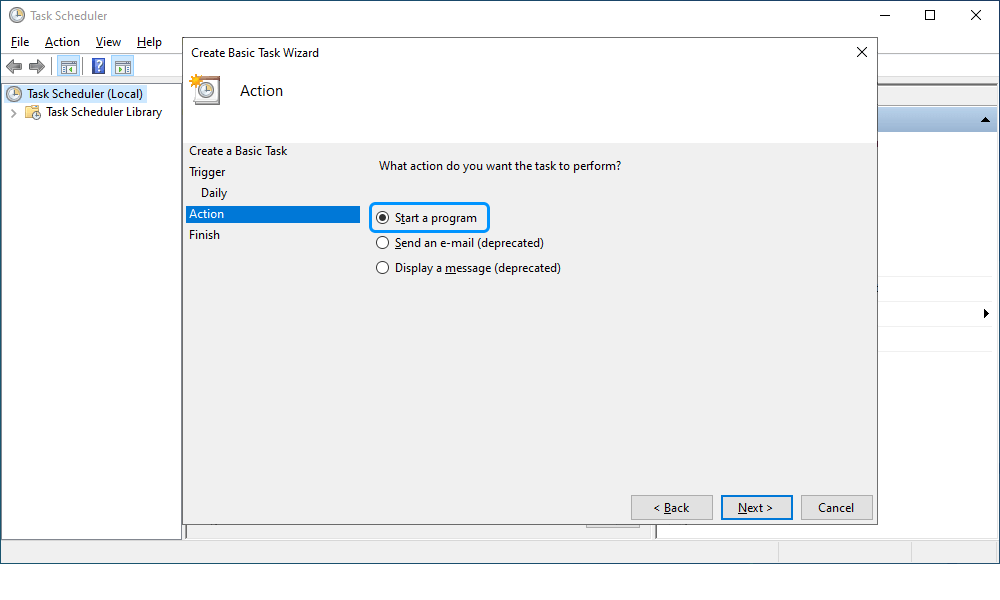

5.5 On the Action tab, click Start a program to schedule a program to start automatically and then click Next.

5.6 On the Action tab, click Start a program to schedule a program to start automatically and then click Next.

5.7 On the Finish tab, verify the settings and click Finish. The task will appear in the Active Tasks section.

Conclusion

dbForge Data Pump for SQL Server supports a command-line interface (CLI) thus allowing the user to perform data import and export tasks from the command line, as well as schedule and automate the population of SQL Server databases with data. This article provides a worked example and a CLI script for running data import from multiple CSV files into multiple database tables. Download Data Pump and try the given scenario—you will see how simple the task can be.