Accidentally deleting files or experiencing data loss might be frustrating, especially when it is important data. Fortunately, it is possible to recover deleted files, and in this article, we will explain how to achieve this with the help of redo and undo scripts generated using dbForge Transaction Log. In addition, we’ll review some basics of ACID properties in transactions and best practices for rollback operations.

Contents

- Understanding ACID properties in transactions

- Preparing for data recovery

- Steps to recover accidentally deleted data

- Using dbForge Transaction Log for rollback

- Best practices for rollback operations

Understanding ACID properties in transactions

The MS SQL Server database management system suggests that all changes are carried out within transactions. A transaction is a logical unit of work that accesses database data through read-and-write operations. Transactions can also be used for schema versioning and managing referential database data, such as during migrations.

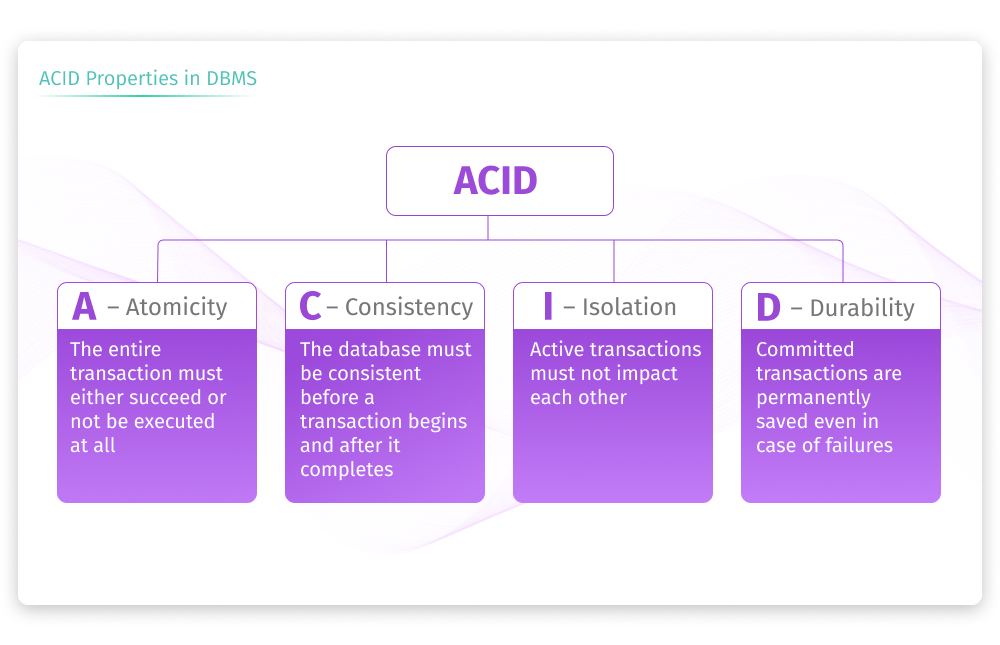

To maintain data consistency and database integrity, it’s crucial to apply the ACID properties before a transaction begins and after it is completed. ACID stands for atomicity, consistency, isolation, and durability.

The following image represents the ACID properties:

Atomicity ensures that all operations within a transaction are treated as a single unit and that all either succeed or fail. So, in case of any unexpected behavior, unwanted error, or system failure, the database will remain in a consistent state, and the transaction will be rolled back.

Consistency guarantees that all changes made within a transaction are consistent with database constraints, rules, and triggers. If the data changes result in corruption or violation of these rules, the transaction is aborted or fails.

Isolation allows multiple transactions to run concurrently in different environments, thus ensuring that they do not interfere with each other.

Durability ensures that all changes within a committed transaction become permanent and won’t be lost, even in the event of unexpected occurrences like power failures.

Preparing for data recovery

Since data loss may occur due to viruses, power outages, or accidental damage, and no one is safe from this, it is important to secure data storage to be able to recover it if needed. This can be achieved using backups or snapshot isolation methods.

A backup is a copy of your data. So, when you create a backup, you “archive” a single or multiple files of your database data that will be kept in an isolated location. However, over time the backup may no longer align with the current database state.

Snapshot isolation allows for a consistent view of the database to maintain concurrency control and data integrity in a multi-user database environment.

In addition to backup or snapshot isolation approaches, the preparation for data recovery may involve the following steps:

- Identify the potential data loss or data affected

- Check the availability and integrity of backups and determine if they are suitable for recovery

- Take measures to prevent data loss or corruption

- Develop a recovery plan

- Document the recovery process, actions taken, and the changes made

- Test recovery methods to ensure that the data can be successfully restored

- Ensure that the recovery process adheres to security protocols and protects sensitive data

Steps to recover accidentally deleted data

Now, let’s view some possible steps to recover accidentally deleted data that generally apply to database systems supporting transaction logs and rollback operations:

Identifying the data loss incident

Start with identifying what data and when it was accidentally deleted.

Generating and exporting undo scripts

Generate undo scripts or statements that can revert the database to the state before the deletion occurs. These scripts typically consist of SQL statements such as DELETE, UPDATE, or INSERT with the necessary values to undo the accidental changes.

Performing the rollback

Execute the generated undo scripts or statements to roll back the database to the state as it was before the data loss incident. This process involves running SQL commands to restore the deleted data or revert the changes made in the accidental deletion.

Using dbForge Transaction Log for rollback operations

Transaction logs in a database record all changes made to the data, and monitoring them is crucial for database performance and maintenance. Transaction logs might grow for the following reasons:

- The more active and long-running transactions are, the larger the log can be.

- Operations with large data sets such as BULK INSERT, UPDATE, or DELETE can generate substantial log entries.

- If the transaction log is not regularly backed up, it can continue to grow indefinitely.

- Transactions that have not been committed or rolled back can prevent the log from being truncated, causing it to grow in size.

But what if we need to understand why the transaction log grows enormously? How can we review the records in the transaction log?

We’ve got a solution: dbForge Transaction Log, a SQL Server tool that helps read the transaction log as well as roll back selected transactions or generate scripts for them.

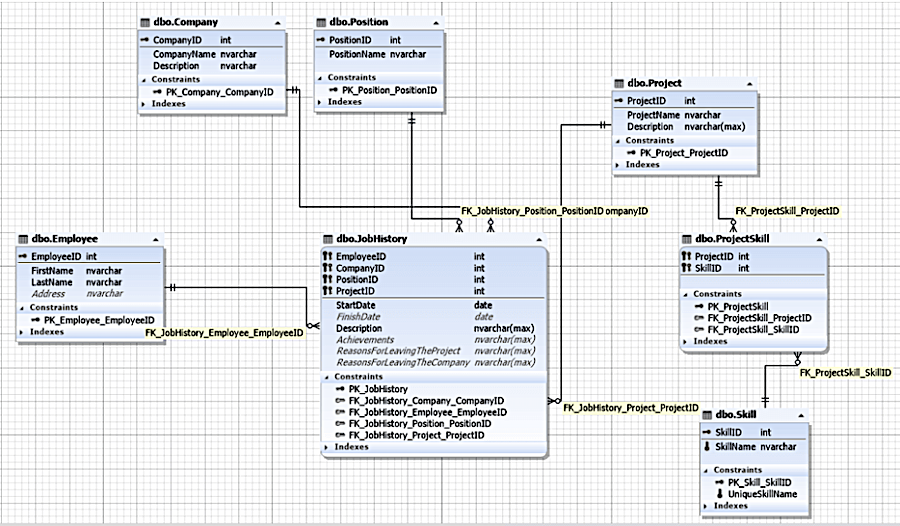

Let’s take an example: a JobEmplDB database for a recruitment service.

Reading the transaction log

To read the transaction log, follow the steps:

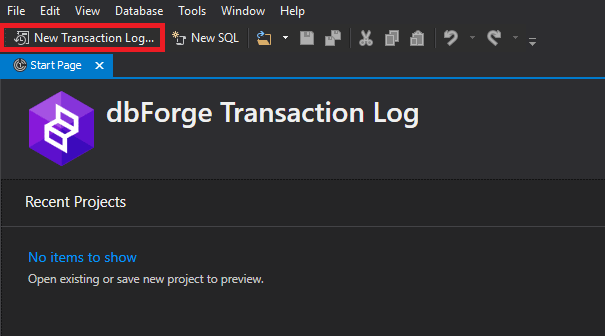

1. Open dbForge Transaction Log and click New Transaction Log Project on the toolbar.

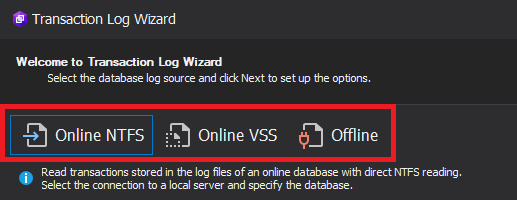

2. In the Transaction Log Wizard that opens, select the required mode for the transaction log.

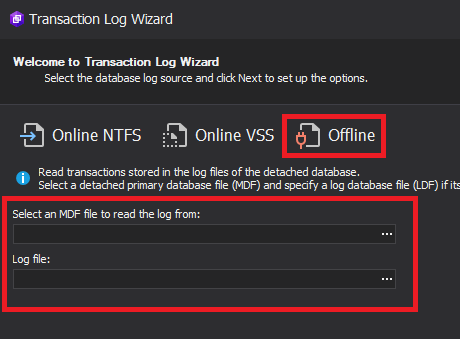

2.1. Offline mode is suitable for working with the transaction log of a detached database. On this page, select the data files and the transaction log files.

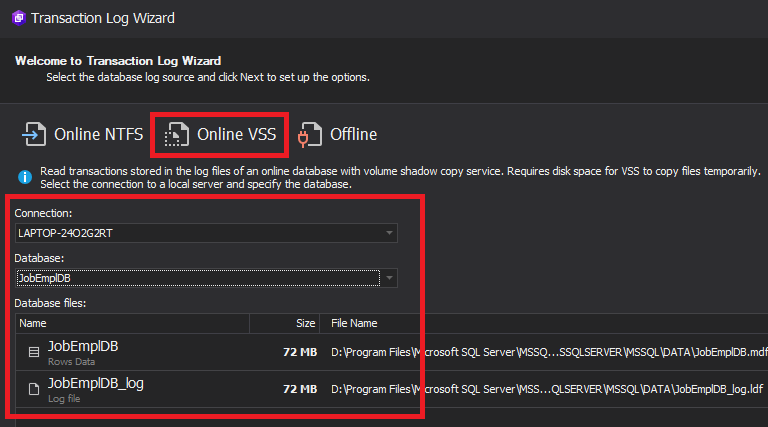

2.2. Online VSS mode works with a shadow copy of the database transaction log. On this page, select the connection and database.

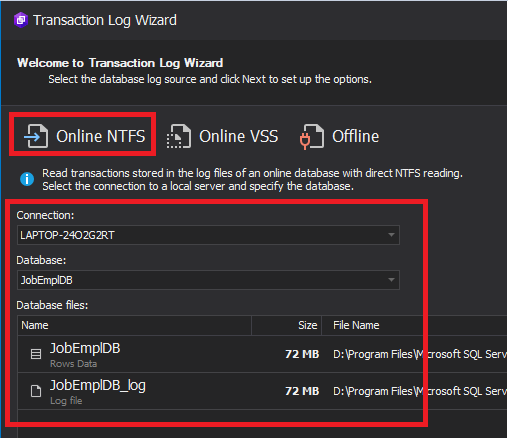

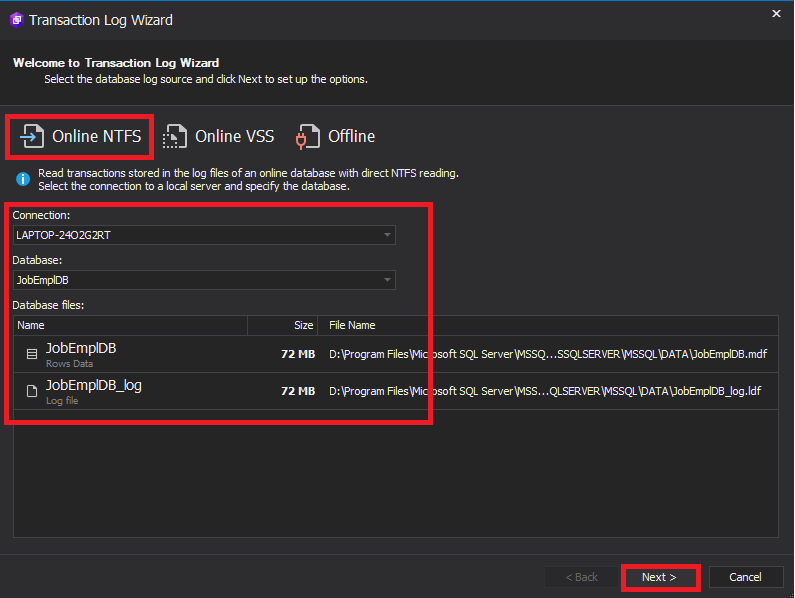

2.3. Online NTFS mode allows working with the transaction log of an online database (we also select the connection and database).

In our example, we select the Online NTFS mode and choose the necessary connection and database. Then, click Next in the bottom right corner of the wizard.

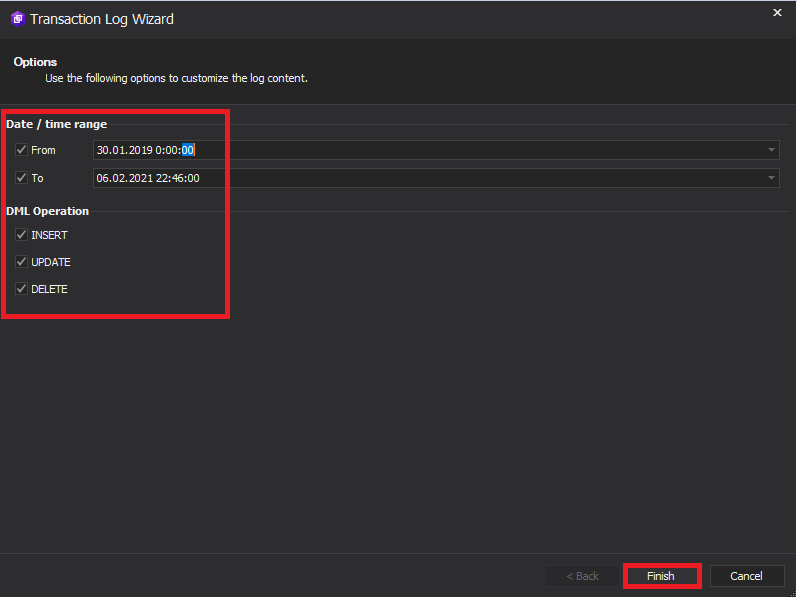

Then, select the appropriate parameters to filter the required transactions from the transaction log. In the filters, we can set the time and date range, as well as select the types of DML statements (INSERT, UPDATE, and DELETE).

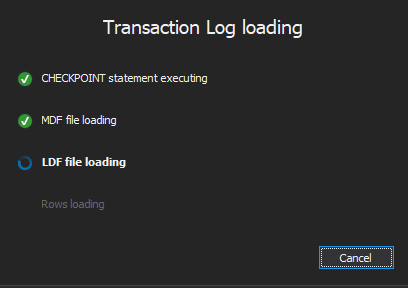

4. After configuring all the required filters, click Next in the bottom right corner of the screen to start data collection.

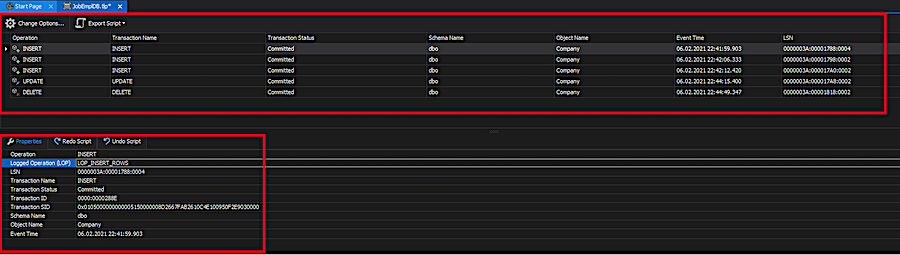

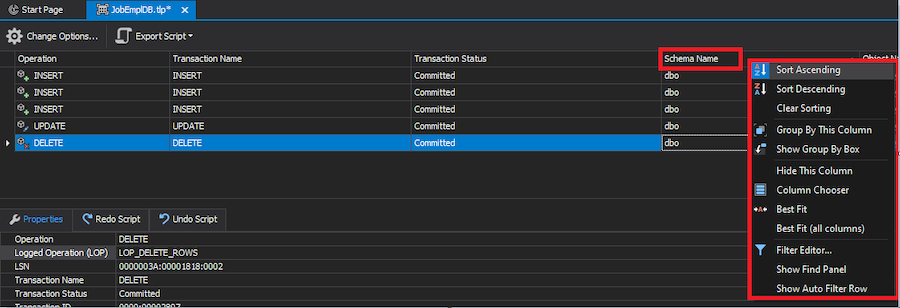

5. When the process is over, we see the window with filtered transactions triggered by DML statements. The list of transactions is on the top pane. The detailed information for each selected transaction is provided on the bottom pane.

Generating and exporting redo and undo scripts

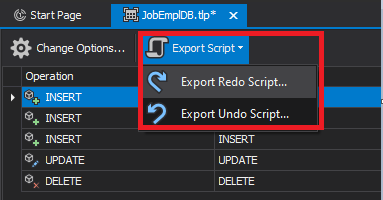

The Export Script menu has two options:

- Change Options adjusts filtering parameters for transactions

- Export Script generates undo/redo scripts for selected changes

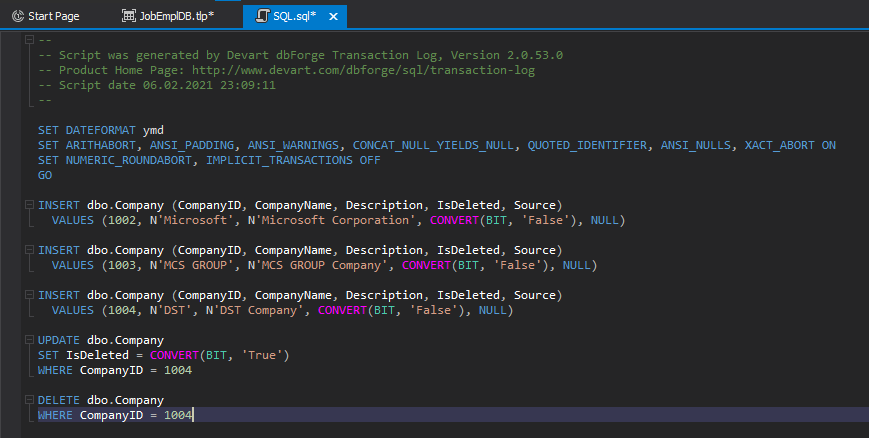

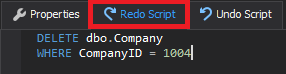

If we choose to export a redo script, we will see the following output:

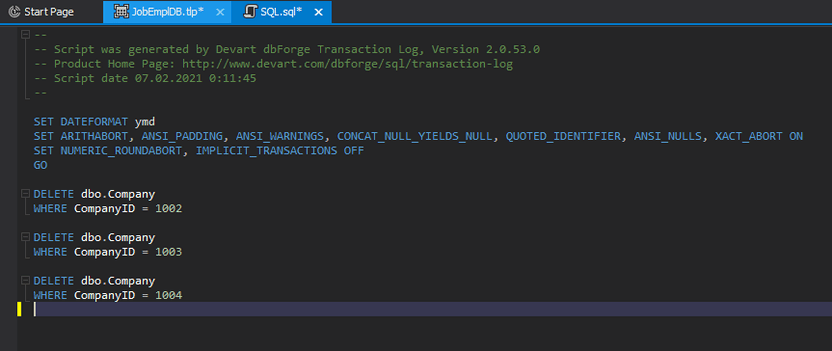

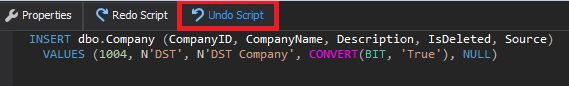

If we choose to export an undo script, we will get the following result:

These scripts apply to both rolling back the current changes and redoing changes in a similar database.

Note: To redo changes, the state of the database must allow execution of the script.

Now, let’s get back to the filtered transactions window and examine the table of selected transactions. We will see that we can perform sorting by any column. Also, the column menu offers a number of other operations, as shown in the following screenshot.

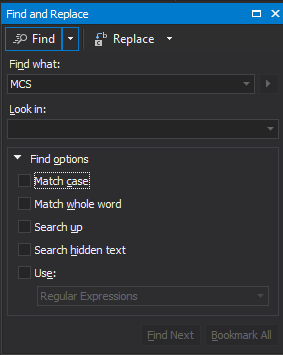

We can search through the selected transactions using CTRL+F:

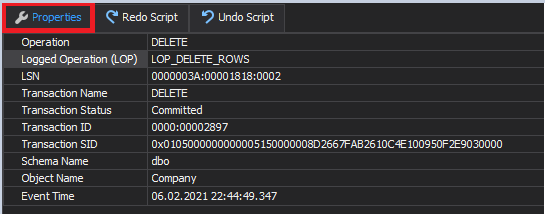

On the Properties tab, we can see the main properties of the selected transaction.

On the Redo Script tab, we can view the script to redo changes (the entire transaction code):

The Undo Script tab presents the script to undo changes. It is a kind of transaction rollback simulation.

Best practices for rollback operations

In conclusion, let’s identify the best practices for rollback operations that may be used in case of any unexpected issues:

Creating revert scripts for emergency recovery

- Develop and maintain revert or rollback scripts that can quickly and accurately undo changes made by specific transactions or updates.

- Ensure that the revert scripts are thoroughly tested and kept up-to-date as database schema and application logic evolve.

Audit trails and monitoring transaction logs

- Implement robust audit trails to track changes and actions within the database, such as recording who made changes, what changes were made, and when they occurred.

- Regularly monitor transaction logs for unusual or unexpected activity.

Regular testing of rollback scenarios

- Regularly conduct testing of rollback scenarios and emergency recovery procedures.

- Include different types of scenarios in your testing, such as accidental data deletions, data corruption, and application logic errors.

Conclusion

You might want to undo your last SQL query for a number of reasons, and this is how dbForge Transaction Log helps you do it easily. With this tool, you can read your transaction log quickly, even online, and without database shadow copying. When the transaction log keeps growing enormously, the tool helps reveal the reason why it does so. It is also helpful when you need to generate scripts to redo and undo selected changes for your DML operations.